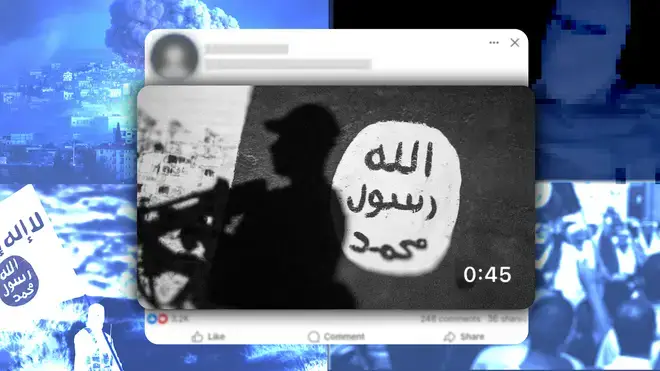

Facebook is not quickly removing the graphic and disturbing footage of these murders: some of the videos stayed on the platform for months, and one remained on the site for more than a decade.

After LBC contacted Facebook's parent company Meta about our findings, it removed the videos and the accounts that had uploaded them.

One of the videos LBC found showed a child of around 11 using a pistol to shoot two people in the head, then firing repeatedly at their bodies as they lay on the ground. The video is intended as a warning to anyone considering spying on the terror organisation.

Another, with slick production values, appeared to have been created as promotional content for the Islamic State. The video opened with an animated sequence showing a person moving pieces around a map of the Middle East while a voiceover made threats against Israel and Jewish people.

It then cut to footage of fighters firing weapons, interspersed with brief glimpses of graphic content. The video ramped up in intensity towards the end, explicitly showing an individual being shot in the head, before ending with disturbing footage of a person's throat being slit.

Responding after LBC alerted it to the videos, Meta insisted that "there is no place on our platforms for people or content that promotes violence or terrorism".

It added that the company removes "all representation, glorification and support when we become aware of it".

But with some videos being hosted by platforms such as Facebook for weeks, months or even years, calls are now being made for both tech companies and the UK government to do more to clamp down on content that glorifies terrorism and terrorist organisations.

Jonathan Hall KC, a senior barrister and the Government's designated Independent Reviewer of Terrorism Legislation, says that tech companies, and the UK government, are gaslighting the public on issues such as this.

Mr Hall told LBC that big tech has too little incentive to remove terrorism content from its platforms, because circulating such content serves the companies' commercial interests.

He added that the UK is not currently able to protect vulnerable people or block terrorism content, despite existing terrorism legislation and the newly introduced Online Safety Act.

Mr Hall said: "The public is being gaslit by the tech companies and, I regret to say, by Government.

"Tech platforms have little incentive to remove terrorism content – their commercial interests are served by circulating content, not by removing it – and the Government wants to claim that the UK is the safest place to be online, irrespective of reality.

"Until we have an honest assessment of how tech companies operate, and an honest assessment of the limits of the Online Safety Act 2023, the public, parents, children – all of us will be losers.

"The UK is a huge market for advertisers, who pay millions to tech companies, but I am afraid that the UK's commercial weight has not yet been translated into an ability to protect the vulnerable or block this sort of material which has zero free speech value."

Our investigation also revealed that infamous Islamic State execution videos from the peak of its power were available on Facebook – for more than 10 years.

One video showing the murder and decapitated corpse of journalist James Foley had been on the platform since 2014.

Mr Foley was killed in 2014 by British terrorist Mohammed Emwazi, also known as "Jihadi John", in what Islamic State claimed was retaliation for US airstrikes on its fighters in Iraq.

As well as Islamic State videos, we also discovered a video, uploaded in 2019, showing members of the Nigeria-based Islamist terror group Boko Haram murdering three people.

Much like the Islamic State, Boko Haram is a designated terrorist organisation in the UK.

Facebook's own content moderation guidelines warn users against posting "videos of people, living or deceased, in non-medical contexts, depicting:

The guidelines also state that Meta will not allow content from terrorist organisations, which it says face "the most extensive enforcement", adding: "we believe that these entities have the most direct ties to offline harm."

Meta uses artificial intelligence to detect terrorist material in video, audio, text and graphics, such as logos and depictions of violence. It also employs human researchers with expertise in law enforcement, national security, counterterrorism intelligence and academic studies of radicalisation.

It works with other tech companies, think tanks, and governments to tackle terror content on its platforms.

In response to LBC's investigation into terror content hosted on its platforms, a spokesperson for Meta said: "This article is based on five videos that altogether had fewer than a thousand views, and in the first half of this year, we proactively took action on over 19 million pieces of terrorism content on Facebook and Instagram – nearly 99 per cent before it was reported to us."

The Online Safety Act is a new law that seeks to protect citizens online and puts greater duties on social media platforms to make them more responsible for the safety of their users.

It requires companies to take "robust action" against illegal content and activity by putting systems in place to reduce the risk of their platforms being used to commit offences. Not only are they expected to remove illegal content, they also need to consider how to stop it from appearing at all.

Terrorism is one of the categories of illegal content covered by the Online Safety Act. However, the regulator Ofcom states that not all content relating to terrorism is illegal in the UK.

Examples of illegal terrorism content include: content that encourages terrorism or amounts to the dissemination of terrorist material; content saying or showing that the person posting is a member of a terrorist organisation proscribed by the UK Government; and images of an item of clothing or some other article, such as a flag or logo, shown in a way that would lead the viewer to suspect that the person posting the content is a member or supporter of a proscribed organisation.

An Ofcom spokesperson said: "Duties on tech firms under the Online Safety Act to protect UK users from illegal terrorist content came into force this year. That means if a post is reported to a platform now, the platform must decide whether the content is illegal under UK law, and take it down swiftly if it is.

"Not all content relating to terrorism is illegal under UK laws. Where content is legal but harmful to children, including material that’s violent or incites hatred, tech firms must reduce the risk of children encountering it.

"Our job is to make sure sites and apps have appropriate measures in place to comply with their duties, and we’ve shown we’ll take swift enforcement action if evidence suggests companies are falling short. We’ve launched investigations into over 70 platforms, and expect to announce more in the coming weeks and months."